If you work on a High Performance Computing (HPC) system, you have probably run into the problem of how to get data in and out of it.

We are very lucky in Australia to have CloudStor, AARNet's hosted ownCloud service, which gives every researcher 1 TB of storage and can be used to move data between different computing systems.

The only problem is that on an HPC you can’t open a browser and you also can’t install the ownCloud client – so what other options exist?

There is a wonderful tool called rclone, which interfaces with most major cloud storage providers out there 🙂

A detailed description of how to connect rclone to CloudStor is available, so let’s go through it step by step and set it up together:

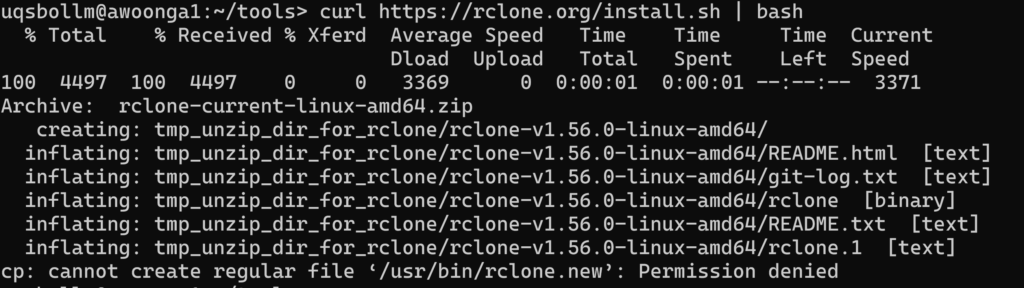

Connect to your favorite HPC and install rclone there. The official install instructions for rclone are not much help here: on an HPC we cannot use sudo, and their install script as well as the rpm and apt packages all need it. If you try any of the official instructions on an HPC, they will fail with errors.

Let’s install it in our home directory 🙂

cd

mkdir tools

cd tools

wget https://downloads.rclone.org/rclone-current-linux-amd64.zip

unzip rclone-current-linux-amd64.zip

rm rclone-current-linux-amd64.zip

cd rclone-*

echo "export PATH=\$PATH:$PWD" >> ~/.bashrc

source ~/.bashrc
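One small caveat with the `echo ... >> ~/.bashrc` line above: it appends a new entry every time it is run, so repeating the install leaves duplicate lines behind. A minimal sketch of a variant that only appends when the line is not there yet is shown below. The helper name `add_to_path_once` is made up for this example and is not part of rclone.

```shell
# add_to_path_once is a hypothetical helper for this sketch, not an rclone command.
# Usage: add_to_path_once <directory> [rc-file]   (rc-file defaults to ~/.bashrc)
add_to_path_once() {
    dir="$1"
    rcfile="${2:-$HOME/.bashrc}"
    # The literal line we want in the rc file; \$PATH stays unexpanded on purpose
    line="export PATH=\$PATH:$dir"
    # Make sure the rc file exists so grep does not complain
    touch "$rcfile"
    # -F fixed string, -x match the whole line, -q no output
    if ! grep -Fxq "$line" "$rcfile"; then
        printf '%s\n' "$line" >> "$rcfile"
    fi
}
```

From inside the unpacked rclone directory you would call `add_to_path_once "$PWD"`, then `source ~/.bashrc`, and `rclone version` should print the installed version if the PATH entry works.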

rclone config

Create a new remote: n

Provide a name for the remote: CloudStor

For the “Storage” option choose: webdav

As “url” set: https://cloudstor.aarnet.edu.au/plus/remote.php/webdav/

As “vendor” set OwnCloud: 2

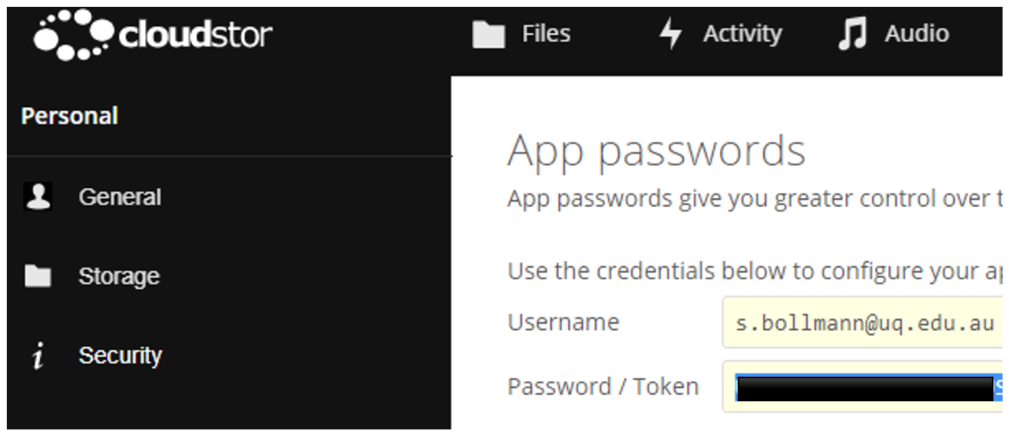

Set your CloudStor username after generating an access token:

Choose to type in your own password: y

Enter the Password / Token from the CloudStor App passwords page and confirm it again:

Leave the bearer_token blank: <hit Enter>

No advanced config necessary: <hit Enter>

Accept the configuration: <hit Enter>

Quit the config: q
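Before moving real data it can be worth checking that the new remote actually works. The sketch below wraps two standard rclone commands, `rclone config show` and `rclone lsd`, in a small function. The function name `check_remote` is made up for this example, and it assumes the remote was named CloudStor as in the steps above.

```shell
# check_remote is a hypothetical helper for this sketch, not an rclone command.
# Usage: check_remote <remote-name>
check_remote() {
    remote="$1"
    # Stop early if rclone is not on the PATH yet
    if ! command -v rclone >/dev/null 2>&1; then
        echo "rclone not found on PATH" >&2
        return 1
    fi
    # Print the stored settings for this remote, then list its top-level folders;
    # a folder listing without errors means the login works
    rclone config show "$remote" && rclone lsd "$remote:"
}
```

Running `check_remote CloudStor` should first print the settings entered during `rclone config` and then the folders in your CloudStor account.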

Now we can download data to the HPC easily:

rclone copy --progress --transfers 8 CloudStor:/raw-data-for-science-paper .

Or upload data to CloudStor:

rclone copy --progress --transfers 8 . CloudStor:/hpc-data-processed

If you need to upload lots of data, there is a very nice wrapper script from AARNet that tweaks a few settings and checks whether all files have been transferred: https://github.com/AARNet/copyToCloudstor

git clone https://github.com/AARNet/copyToCloudstor

cd copyToCloudstor

./copyToCloudstor.sh ~/scratch/data/ CloudStor:/all-my-important-HPC-data-for-nature-paper
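For smaller transfers where the wrapper script feels like overkill, a similar "did everything arrive?" check can be done by hand with plain rclone. The following is only a sketch and not a description of what copyToCloudstor.sh does internally. The function name `copy_and_check` is made up for this example, while `--dry-run` and `rclone check --one-way` are standard rclone options.

```shell
# copy_and_check is a hypothetical helper for this sketch, not an rclone command.
# Usage: copy_and_check <source> <destination>
copy_and_check() {
    src="$1"
    dest="$2"
    # Preview what would be transferred without changing anything
    rclone copy --dry-run "$src" "$dest" || return 1
    # Do the real transfer with the same options used earlier in this post
    rclone copy --progress --transfers 8 "$src" "$dest" || return 1
    # Compare both sides; --one-way only requires that every source file
    # exists on the destination, extra files there are ignored
    rclone check --one-way "$src" "$dest"
}
```

For example, `copy_and_check . CloudStor:/hpc-data-processed` uploads the current directory and returns a non-zero exit code if any step fails or the final check finds a mismatch.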
